Contributions

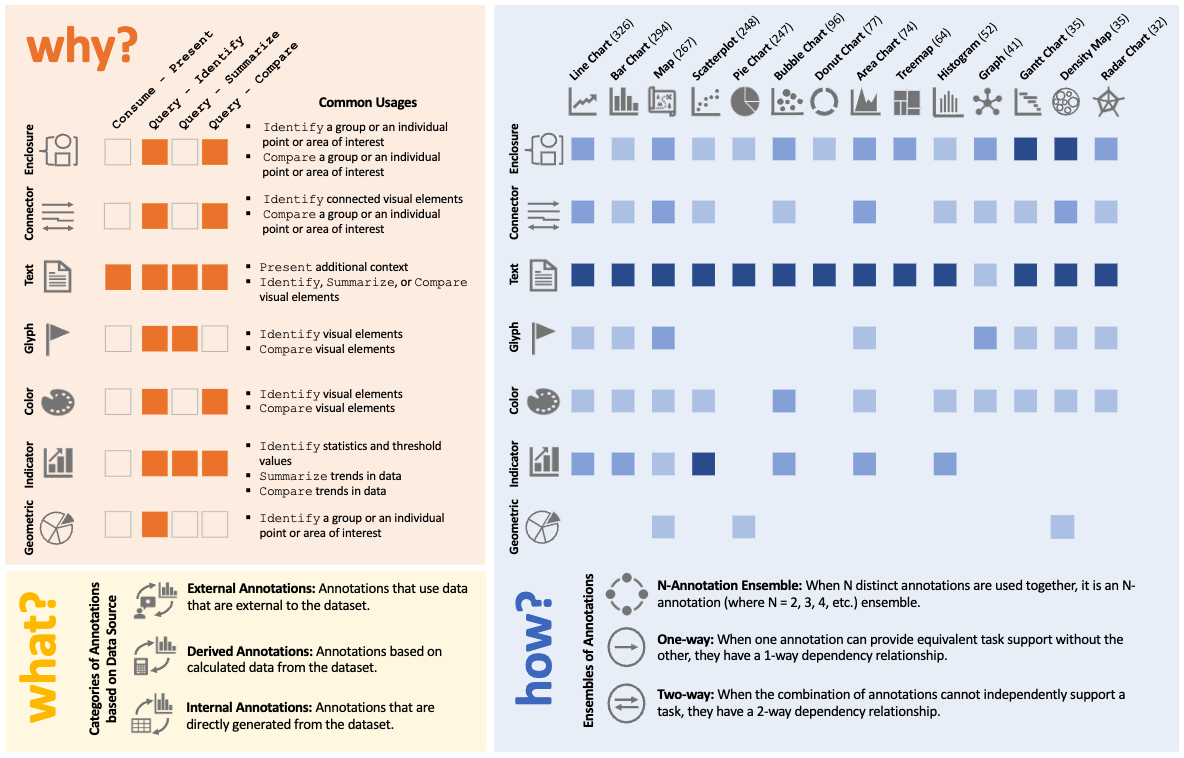

The paper contributes a corpus-based qualitative analysis of 1,888 annotated charts spanning 14 chart types. The analysis yields a seven-type annotation taxonomy and a three-dimensional design space that structures annotation decisions around task intent, visual form, and data provenance.

Key Findings

The paper’s core result is a practice-grounded framework for annotation design. From 1,888 real-world charts, it derives a taxonomy and a structured design space that help researchers and practitioners reason about annotation decisions across chart contexts.

Why, How, and What

The design space structures annotation decisions along three dimensions: Why (the analytic task the annotation supports), How (the visual type and ensemble configuration), and What (the provenance of annotation content). The dimensions can be chosen independently, yet together they determine whether an annotation is appropriate.
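One way to picture the three dimensions is as a small record with one field per dimension. The sketch below is only an illustration: the class name `AnnotationDecision`, its field layout, and the placeholder values are assumptions made for this example and are not categories or code from the paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AnnotationDecision:
    """One annotation decision described along the three dimensions."""

    why: str              # analytic task the annotation supports
    how: tuple[str, ...]  # visual type(s); more than one entry means an ensemble
    what: str             # provenance of the annotation content

    def is_ensemble(self) -> bool:
        """True when several visual types are combined in one annotation."""
        return len(self.how) > 1


# Placeholder values only; the paper's actual categories are not reproduced here.
decision = AnnotationDecision(
    why="example-task",
    how=("example-type-a", "example-type-b"),
    what="example-source",
)
print(decision.is_ensemble())
```

Keeping each dimension in its own field mirrors the idea that the dimensions are selected independently, while any judgment about appropriateness has to look at all three together.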

Dataset Construction

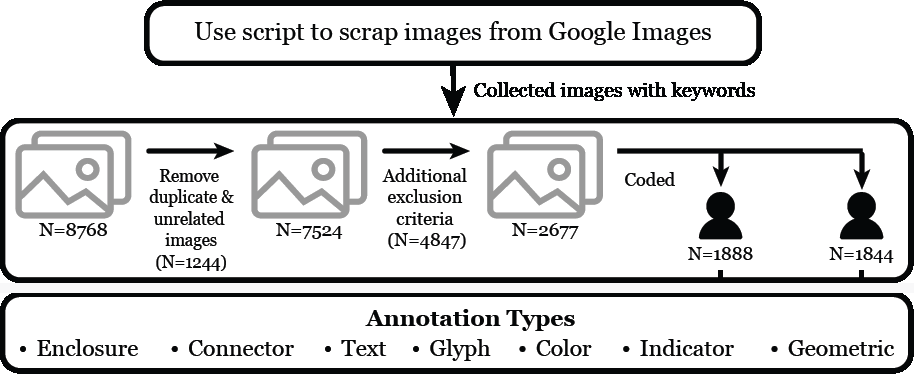

The corpus was assembled through systematic web retrieval and iterative exclusion filtering, then subjected to qualitative open coding until categorical saturation. The process is designed to ensure the taxonomy reflects observed annotation behavior rather than hypothetical or idealized examples.

Study Design

Starting from 8,768 images retrieved via Google Images, the corpus was reduced through successive exclusion rounds (removing duplicates, non-data graphics, and ambiguous examples) before iterative open coding. Coding continued until no new categories emerged, which is the basis for treating the taxonomy as complete.
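The two stages described above, successive exclusion and coding until saturation, can be sketched roughly as follows. This is a toy illustration under stated assumptions: the function names `apply_exclusions` and `code_until_saturation`, the per-item exclusion predicates, and the stopping rule (one batch that adds no new category) are all hypothetical, since the page does not specify how the authors operationalized either step.

```python
def apply_exclusions(items, rules):
    """Apply each exclusion rule in turn; return survivors and per-round counts."""
    remaining = list(items)
    counts = [len(remaining)]
    for should_exclude in rules:
        remaining = [x for x in remaining if not should_exclude(x)]
        counts.append(len(remaining))
    return remaining, counts


def code_until_saturation(batches, assign_codes):
    """Code batches in order; stop after the first batch that adds no new category.

    The one-batch stopping rule is an assumption made for this sketch.
    """
    categories = set()
    coded_batches = 0
    for batch in batches:
        new = set()
        for chart in batch:
            new |= set(assign_codes(chart)) - categories
        coded_batches += 1
        if not new and categories:
            break  # saturation: this batch introduced nothing new
        categories |= new
    return categories, coded_batches


# Toy data: integers stand in for images, single letters for charts and codes.
survivors, counts = apply_exclusions(
    range(10),
    [lambda x: x % 2 == 0, lambda x: x > 6],
)
categories, n_batches = code_until_saturation(
    [["a", "b"], ["b", "c"], ["a", "c"]],
    assign_codes=lambda chart: [chart],
)
print(counts, sorted(categories), n_batches)
```

Recording the count after every exclusion round makes the reduction from the retrieved set to the analyzed set auditable, which is the same kind of bookkeeping the collected and analyzed figures on this page summarize.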

Collected: 8,768 images

Analyzed: 1,888 charts

Covered: 14 chart types

Types Found: 7 annotation types

Demonstrated: 3 case studies

Seven Annotation Types

Seven annotation types emerged from open coding of 1,888 real-world charts. Each type's profile covers its empirical frequency, supported tasks [Why], ensemble patterns [How], data sources [What], and appearances in the case studies.

Case Studies

Three annotated charts from editorial practice are each analyzed through the [Why], [How], [What] framework. Each breakdown maps every annotation decision to its task intent, visual implementation, and content provenance, demonstrating how the framework supports structured, reproducible annotation reasoning.

Cite This Work

@article{rahman2024annotation,
  title   = {A Qualitative Analysis of Common Practices in Annotations: A Taxonomy and Design Space},
  author  = {Rahman, Md Dilshadur and Quadri, Ghulam Jilani and Doppalapudi, Bhavana and Szafir, Danielle Albers and Rosen, Paul},
  journal = {IEEE Transactions on Visualization and Computer Graphics},
  year    = {2024},
  doi     = {10.1109/TVCG.2025.3565855}
}